Here’s a scenario that may sound all too familiar: The chief executive officer (CEO) catches you in the hallway and tells you about a devastating cyberattack on a power plant in Australia that she read about on CNET. “I want you to find out who and what attacked that power plant and make sure we’re protected. There’s an influx of these types of attacks, and we can’t take any chances!”

Instinctively you reply, “I’m on it!” But, after a minute you start questioning yourself: We’re a hospital campus in Canada, so is this attack even relevant to us? Should we even try to protect ourselves against every known attack scenario, and would that be the most efficient way to secure our operations?

That CEO wasn’t entirely wrong. There is a surge in cyberattacks on industrial organizations. We’ve all read the news and seen the stats. The need for better operational technology (OT) cybersecurity has by and large been established and internalized, which has led more and more organizations to hire dedicated chief information security officers (CISOs), set up security operations centers (SOCs), and hire cybersecurity experts. These don’t come cheap, and even if you have the budget, there’s an acute shortage of skilled OT security personnel that is nowhere close to being closed; hence the need to optimize OT SOC operations as much as possible.

Risk analysis and management as a method of optimizing security has increased in popularity over the past few years, and rightfully so. As opposed to passive breach prevention systems, which rely exclusively on firewalls and intrusion detection systems (IDSs), risk-based cybersecurity offers a proactive OT security methodology that focuses on the user organization. In the case of an attack, what’s at risk for the user? What impact can the user tolerate? Which threats are most relevant to the user, and which mitigations would be most effective to counter those threats?

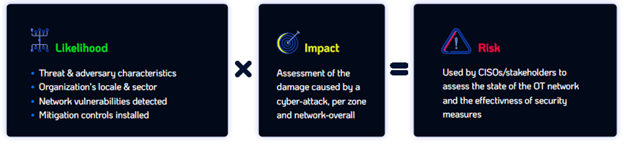

To begin, it’s worth defining OT risk. In simple terms, OT risk is the sum total of the impact of a debilitating attack for each and every OT asset, weighted by the likelihood of an attack.
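Written as a formula, this definition is R = Σ (impact_i × likelihood_i), summed over every OT asset i. The minimal Python sketch below just makes that arithmetic explicit; the `Asset` record, the asset names, and the dollar figures are hypothetical, and likelihood is assumed to be a probability between 0 and 1.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    impact: float      # consequence of a debilitating attack on this asset (e.g. dollars)
    likelihood: float  # probability that such an attack succeeds, in [0, 1]


def ot_risk(assets: list[Asset]) -> float:
    """Sum of each asset's impact, weighted by the likelihood of an attack on it."""
    for a in assets:
        if not 0.0 <= a.likelihood <= 1.0:
            raise ValueError(f"likelihood for {a.name} must be in [0, 1]")
    return sum(a.impact * a.likelihood for a in assets)


# Hypothetical assets: a high-impact, low-likelihood PLC and a lower-impact HMI.
assets = [
    Asset("plc-boiler-1", impact=500_000, likelihood=0.02),
    Asset("hmi-hvac", impact=40_000, likelihood=0.10),
]
print(ot_risk(assets))
```

Here the PLC contributes 10,000 and the HMI 4,000, so both a rare-but-severe and a likely-but-mild scenario count toward the total.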

Likelihood and Impact as Inputs for Breach Simulations

There are several ways to assess the likelihood of an attack on an industrial network. The aim is to use a method that is both highly accurate, in that it represents the specific properties and vulnerabilities of each networked device, and non-intrusive/non-destructive. The most reliable way to calculate the likelihood of an attack is to run a large number of breach and attack simulations (BAS) that draw on multiple data sets:

- Threat and attacker information sources. These include threat intelligence from specialized threat research organizations such as MITRE, whose Adversarial Tactics, Techniques and Common Knowledge (ATT&CK) framework documents the attack indicators and modus operandi of advanced persistent threat (APT) groups, as well as APT feeds from the organization’s security information and event management (SIEM) system.

- Network information. Network specifics are provided by a digital image of the OT network: a highly detailed data file describing all network devices and their properties, device-specific vulnerabilities, connections and ports, communication protocols, and any other network characteristics. Simulations run against the digital image are, by nature, 100% safe, since no simulation action is performed on the production network itself (attack simulations run on the live network, by contrast, do pose some risk). And because industrial networks are deterministic (their properties remain constant unless a change is made to the network), the digital image can serve as an accurate representation of the network for prolonged periods of time.

- Mitigation controls, both those already installed and those not yet installed, for drafting a hardening plan.

- Sector and geo-location of the OT network to filter out adversaries and attack tactics that aren’t relevant to the organization’s network.
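One way to picture how these data sets combine is a Monte Carlo estimate over a graph. This is a minimal sketch under loudly stated assumptions, not any vendor’s BAS engine: the digital image is reduced to a hypothetical dictionary mapping each device to the devices it can reach plus a per-hop exploitation probability, threat groups are hypothetical `ThreatActor` records tagged with target sectors, regions, and entry points, and each simulated breach randomly samples which hops succeed. The sector/geo filter removes irrelevant adversaries before anything is simulated.

```python
import random
from collections import deque
from dataclasses import dataclass

# Hypothetical digital image: device -> [(reachable device, probability the hop
# can be exploited given that link's vulnerabilities and protocols)].
DigitalImage = dict[str, list[tuple[str, float]]]


@dataclass
class ThreatActor:
    name: str
    sectors: set[str]        # sectors this group is known to target
    regions: set[str]        # regions this group is known to target
    entry_points: list[str]  # devices where the group's techniques gain a foothold


def relevant_actors(actors, sector, region):
    """Drop adversaries that do not target this organization's sector and region."""
    return [a for a in actors if sector in a.sectors and region in a.regions]


def simulate_once(image: DigitalImage, entry_points, rng):
    """One simulated breach: sample which hops succeed, return every compromised device."""
    compromised = set(entry_points)
    queue = deque(entry_points)
    while queue:
        node = queue.popleft()
        for neighbor, p_exploit in image.get(node, []):
            if neighbor not in compromised and rng.random() < p_exploit:
                compromised.add(neighbor)
                queue.append(neighbor)
    return compromised


def estimate_likelihood(image, actors, sector, region, trials=10_000, seed=0):
    """Estimated probability that each device is compromised by at least one relevant group."""
    rng = random.Random(seed)
    actors = relevant_actors(actors, sector, region)
    devices = set(image) | {n for hops in image.values() for n, _ in hops}
    devices |= {e for a in actors for e in a.entry_points}
    p_untouched = {d: 1.0 for d in devices}
    for actor in actors:
        hits = {d: 0 for d in devices}
        for _ in range(trials):
            for d in simulate_once(image, actor.entry_points, rng):
                hits[d] += 1
        for d in devices:
            # Assumption: different threat groups act independently.
            p_untouched[d] *= 1 - hits[d] / trials
    return {d: 1 - p for d, p in p_untouched.items()}


image = {
    "it-gateway": [("historian", 0.6)],
    "historian": [("plc-boiler-1", 0.3)],
    "plc-boiler-1": [],
}
actors = [
    ThreatActor("group-a", {"healthcare"}, {"CA"}, ["it-gateway"]),
    ThreatActor("group-b", {"energy"}, {"AU"}, ["it-gateway"]),
]
print(estimate_likelihood(image, actors, "healthcare", "CA"))
```

For a Canadian hospital, only the hypothetical `group-a` survives the filter, and the PLC’s estimated likelihood lands near 0.6 × 0.3 = 0.18. Installed mitigation controls could be modelled by lowering or zeroing the probability on the hops they protect.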

The impact of a debilitating attack is assessed per Zone. In IEC 62443 terms, a Zone is a grouping of assets that share common security requirements; in practice, Zones often correspond to business processes or areas such as “Safety” or “HVAC.” Determining and quantifying the impact of an attack is usually done in collaboration with the network owner and covers all types of adverse impact, including financial loss, equipment damage, harm to employee and visitor safety, loss of compliance or certification, and reputational damage.
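Because these impact types are measured in different units, they have to be brought onto a common scale before they can feed the risk calculation. The sketch below assumes, hypothetically, that the owner rates each category per Zone and agrees on a weight per category (the category names, weights, and 0-10 ratings are illustrative only), and that every asset inherits the impact of its Zone.

```python
from dataclasses import dataclass, field

# Hypothetical weights agreed with the network owner; they convert each
# category's rating into a common impact scale.
IMPACT_WEIGHTS = {
    "financial_loss": 1.0,
    "equipment_damage": 1.0,
    "safety": 5.0,
    "compliance": 2.0,
    "reputation": 1.5,
}


@dataclass
class Zone:
    name: str
    ratings: dict[str, float]  # category -> owner-assessed rating (e.g. 0-10)
    assets: list[str] = field(default_factory=list)


def zone_impact(zone: Zone) -> float:
    """Weighted sum of the owner's impact ratings for one Zone."""
    unknown = set(zone.ratings) - set(IMPACT_WEIGHTS)
    if unknown:
        raise ValueError(f"unknown impact categories: {sorted(unknown)}")
    return sum(IMPACT_WEIGHTS[c] * r for c, r in zone.ratings.items())


def asset_impacts(zones: list[Zone]) -> dict[str, float]:
    """Each asset inherits its Zone's impact (the highest one if listed in several)."""
    impacts: dict[str, float] = {}
    for z in zones:
        value = zone_impact(z)
        for asset in z.assets:
            impacts[asset] = max(impacts.get(asset, 0.0), value)
    return impacts


safety = Zone("Safety", {"safety": 9, "compliance": 6}, ["plc-boiler-1", "sis-controller"])
hvac = Zone("HVAC", {"financial_loss": 4, "reputation": 2}, ["hmi-hvac"])
print(asset_impacts([safety, hvac]))
```

The heavy safety weight is what lets a Zone with little direct financial exposure still dominate the impact ranking.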

Key Indicators and Security Insights Derived from Breach and Attack Simulations

The outcomes of the simulation/assessment phase are twofold:

- Visibility. For all intents and purposes, a detailed risk assessment is the only way for OT network owners to gain visibility into their overall risk, which Zones/processes face the most risk, their threat environment and threat level, device and protocol vulnerabilities, and how far they are from optimal protection. The assessment process should deliver different levels of visibility, from high-level key indicators (e.g., overall risk, control level and threat level for executive reporting and auditing) to detailed security reports used for performing actual network hardening.

- Customizable OT security optimization, through prioritization of mitigation measures. You can only optimize your cybersecurity toward a set objective, and that objective varies among OT organizations. One network owner’s goal may be reducing overall risk, another’s may be demonstrating compliance with IEC 62443 or other security standards, and yet another’s may be hardening only the most critical industrial process. There’s never a single, universal security objective that covers all OT organizations in all sectors and regions.
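The executive-level indicators described under Visibility could be rolled up from per-Zone results roughly as follows. The `ZoneAssessment` record is hypothetical, and so is the definition of “control level” used here (the share of applicable controls already installed); a real platform may define these indicators differently, and threat level is left out of this sketch.

```python
from dataclasses import dataclass


@dataclass
class ZoneAssessment:
    name: str
    risk: float               # impact x likelihood summed over the Zone's assets
    controls_applicable: int  # hypothetical: controls relevant to this Zone
    controls_installed: int   # hypothetical: of those, how many are in place


def key_indicators(zones: list[ZoneAssessment]) -> dict:
    """High-level figures for executive reporting, plus a Zone ranking for drill-down."""
    applicable = sum(z.controls_applicable for z in zones)
    installed = sum(z.controls_installed for z in zones)
    return {
        "overall_risk": sum(z.risk for z in zones),
        # Assumption: no applicable controls counts as fully controlled.
        "control_level": installed / applicable if applicable else 1.0,
        "zones_by_risk": [z.name for z in sorted(zones, key=lambda z: z.risk, reverse=True)],
    }


zones = [
    ZoneAssessment("Safety", risk=12_000.0, controls_applicable=10, controls_installed=4),
    ZoneAssessment("HVAC", risk=2_000.0, controls_applicable=5, controls_installed=5),
]
print(key_indicators(zones))
```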

Once an optimization objective is defined, the results of the breach and attack simulation are converted into a security plan, which includes a prioritized list of mitigations (within the network owner’s budget) that will bring them closest to their risk-reduction goal (e.g., reducing overall risk). Thus, rather than guesstimating the network’s risk level and relying on an inaccurate, subjective understanding of the network’s risk and how to reduce it, network owners can invest their security budget much more effectively, providing a higher ROI for security expenditure.
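Under simplifying assumptions, choosing the best set of mitigations within a budget is a 0/1 knapsack problem. The sketch below assumes each hypothetical `Mitigation` has an integer cost and a fixed, additive risk reduction per Zone (real controls often overlap, so this overstates their combined benefit), and expresses the owner’s objective as Zone weights: all ones for reducing overall risk, or a single nonzero weight to harden only the most critical process.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mitigation:
    name: str
    cost: int                         # e.g. thousands of dollars; must be >= 0
    risk_reduction: dict[str, float]  # Zone -> risk removed (assumed additive)


def benefit(m: Mitigation, zone_weights: dict[str, float]) -> float:
    """Risk reduction as scored by the owner's objective (Zone weights)."""
    return sum(zone_weights.get(z, 0.0) * r for z, r in m.risk_reduction.items())


def plan(mitigations, budget: int, zone_weights):
    """0/1 knapsack: best[b] holds the top (value, chosen names) with total cost <= b."""
    best = [(0.0, ())] * (budget + 1)
    for m in mitigations:
        value = benefit(m, zone_weights)
        if value <= 0:
            continue  # does nothing for this objective
        # Iterate budgets downward so each mitigation is used at most once.
        for b in range(budget, m.cost - 1, -1):
            candidate = best[b - m.cost][0] + value
            if candidate > best[b][0]:
                best[b] = (candidate, best[b - m.cost][1] + (m.name,))
    total, chosen = best[budget]
    by_name = {m.name: m for m in mitigations}
    # Return the chosen set as a prioritized list, largest benefit first.
    ranked = sorted(chosen, key=lambda n: benefit(by_name[n], zone_weights), reverse=True)
    return ranked, total


mitigations = [
    Mitigation("segment-safety-network", 50, {"Safety": 40.0}),
    Mitigation("mfa-remote-access", 30, {"Safety": 15.0, "HVAC": 10.0}),
    Mitigation("patch-hvac-controllers", 20, {"HVAC": 20.0}),
]
print(plan(mitigations, 60, {"Safety": 1.0, "HVAC": 1.0}))  # objective: overall risk
print(plan(mitigations, 60, {"Safety": 1.0}))               # objective: critical process only
```

The same budget yields different plans for the two objectives: spreading spend across two cheaper controls removes the most overall risk, while hardening only the Safety Zone favors network segmentation.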

The Practicalities of OT Risk Assessment

The frequency, scope, and actual implementation of OT risk assessment depend on the nature of the OT organization’s operations, available resources (including OT security expertise), and sector/criticality.

- Frequency. Any risk assessment is only valid as of the time it was performed, and the OT threat environment is anything but constant. Furthermore, newly installed network assets and other changes to the network introduce new vulnerabilities. For most organizations, a monthly assessment should suffice, while high-risk operations should perform assessments much more frequently, using real-time APT feeds from their SIEM system in addition to threat intelligence feeds.

- Overcoming the skill/resource gap. Depending on their size, sector, exposure to risk, and access to skilled OT professionals, some OT organizations may choose to use managed security service providers (MSSPs) for risk assessments, usually as part of a comprehensive OT security package, rather than set up a full-fledged OT SOC. This model gives them access to a level of service that would otherwise be out of reach for many OT organizations.

- Scope and security standard. OT risk can only be assessed for a predefined set of threat types, and determining which threat types are relevant to each user is far from trivial. This is where security frameworks come in handy, as they provide a set of foundational requirements against which threats can be assessed. For example, the IEC 62443 standard, which my company’s risk assessment platform adheres to, currently defines seven such foundational requirements, covering identification and authentication control, use control, data confidentiality, and more (our risk assessment platform also allows users to assess for additional threat types beyond those defined in IEC 62443, such as supply chain attacks).
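The frequency guidance above can be expressed as a simple reassessment trigger. In this sketch the 30-day default mirrors the “monthly” suggestion, while the one-day interval for high-risk operations and the parameter names are hypothetical choices for illustration; any change to the network triggers a re-run because it can introduce new vulnerabilities.

```python
from datetime import datetime, timedelta

DEFAULT_INTERVAL = timedelta(days=30)   # roughly monthly, as suggested above
HIGH_RISK_INTERVAL = timedelta(days=1)  # assumption: illustrative value only


def needs_reassessment(
    last_run: datetime,
    now: datetime,
    *,
    high_risk: bool,
    network_changed: bool,
    new_apt_alert: bool,
) -> bool:
    """Return True if the OT risk assessment should be re-run."""
    # New or changed assets can introduce new vulnerabilities: always re-run.
    if network_changed:
        return True
    # High-risk operations consuming real-time APT feeds re-run on new alerts.
    if high_risk and new_apt_alert:
        return True
    interval = HIGH_RISK_INTERVAL if high_risk else DEFAULT_INTERVAL
    return now - last_run >= interval


last = datetime(2024, 1, 1)
print(needs_reassessment(last, last + timedelta(days=10),
                         high_risk=False, network_changed=False, new_apt_alert=False))
```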

Conclusion

Risk-based OT security, the practice of applying quantitative analysis to determine the optimal security measures for achieving the organization’s goals, is now widely accepted as the most efficient and cost-effective OT security method. OT operators of all types and sizes need to realize that merely “eyeballing” risk is a thing of the past. There is simply no way for any security professional, however expert, to determine by intuition alone an industrial automation system’s exposure to risk and which mitigation controls would best protect the network. New technologies and methodologies have streamlined data collection and risk assessment, making it possible for industrial organizations of all types and sizes to optimize their OT security operations.